The Relationship Between Organizational Structure and Data Quality

by DL Keeshin

February 11, 2026

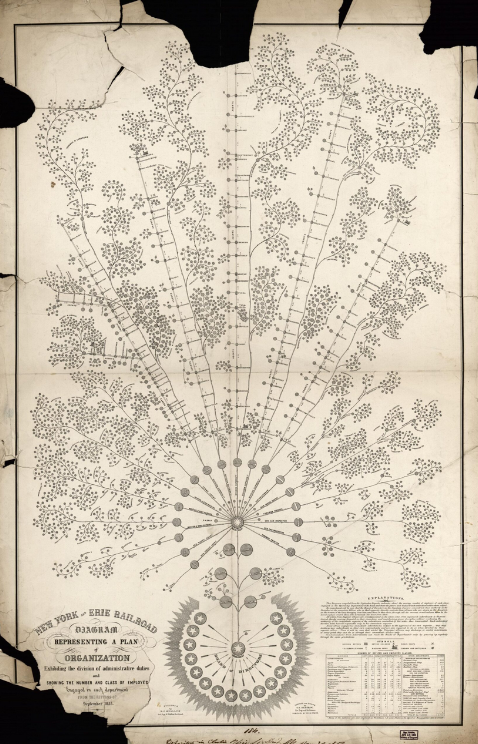

The New York and Erie Rail Road organizational chart from 1855, considered the first modern organizational chart. Source: Library of Congress

In my last blog post, I explored the Organizational Data Flow Architecture approach and how it provides a new methodology for understanding enterprise data through the lens of organizational structure. Today, let's dig into the fundamental relationship between how organizations are structured and the quality of their data.

The relationship between data quality and organizational structure is profound and bidirectional—each shapes and constrains the other in ways that can either reinforce excellence or perpetuate dysfunction.

A Brief Structural History

Organizational structure as a deliberate design problem emerged during the Industrial Revolution. Early factories adopted military hierarchies—clear chains of command, specialized roles, centralized decision-making. Frederick Taylor's scientific management (early 1900s) formalized this into rigid functional divisions: production, accounting, sales. Alfred Sloan's General Motors innovations in the 1920s introduced the divisional structure, allowing semi-autonomous business units under corporate oversight. This worked brilliantly for stable, predictable environments where information flowed slowly and decisions could be made at the top.

The information age shattered those assumptions. Peter Drucker predicted the rise of "knowledge workers" in the 1950s, but the real transformation accelerated with networked computing. Matrix structures emerged in the 1970s and 1980s to balance functional expertise with project needs, though they often created confusion over accountability. The internet era brought network organizations, agile teams, holacracy experiments, and platform models. Today's structures attempt to balance specialization with coordination, autonomy with alignment, and speed with control. Those challenges grow harder as the volume and complexity of organizational data increase.

This evolution reveals a fundamental tension: as organizations grew more complex and data-dependent, their structures often worked against data quality rather than supporting it. The very divisions designed to manage complexity—functional departments, business units, reporting hierarchies—created boundaries where data fragments, definitions diverge, and accountability dissolves. Understanding how structure drives data quality requires examining these dynamics in detail.

Structural Drivers of Data Quality

Centralized vs. Decentralized Governance

Organizations with centralized data teams often achieve higher consistency and standardization but struggle with responsiveness. The data team becomes a bottleneck—they can't possibly understand every domain deeply enough to catch quality issues at the source. Conversely, federated models where business units own their data can achieve better domain accuracy but often fragment standards, creating integration nightmares downstream.

The sweet spot tends to be a "hub and spoke" model—central standards and infrastructure with domain ownership of actual data assets. But this requires sophisticated coordination that many organizations lack.
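To make the hub-and-spoke idea concrete, here is a minimal sketch in Python. It assumes the "hub" publishes a small shared standard and each "spoke" keeps ownership of its own dataset. The field names, the `CENTRAL_STANDARD` dictionary, and the `violations` helper are all hypothetical, invented for illustration rather than taken from any particular governance tool.

```python
from dataclasses import dataclass

# Hypothetical central standard published by the hub:
# field name -> required Python type.
CENTRAL_STANDARD = {"customer_id": str, "created_at": str, "country": str}


@dataclass
class DomainDataset:
    name: str
    owner: str        # the domain team accountable for this data (the spoke)
    rows: list


def violations(dataset: DomainDataset) -> list:
    """Return readable descriptions of rows that break the central standard."""
    problems = []
    for i, row in enumerate(dataset.rows):
        for field, expected in CENTRAL_STANDARD.items():
            if field not in row:
                problems.append(f"{dataset.name}[{i}]: missing {field}")
            elif not isinstance(row[field], expected):
                problems.append(
                    f"{dataset.name}[{i}]: {field} is not {expected.__name__}"
                )
    return problems


# Illustrative data owned by a hypothetical Sales Ops team.
sales = DomainDataset(
    name="sales.customers",
    owner="Sales Ops",
    rows=[
        {"customer_id": "C-1", "created_at": "2025-01-04", "country": "US"},
        {"customer_id": 2, "created_at": "2025-02-11"},
    ],
)

for problem in violations(sales):
    # Route each problem to the owning domain team, not to the central team.
    print(f"{sales.owner}: {problem}")
```

The point of the design is the split of responsibilities: the hub decides what "valid" means, while the fix is routed to the team that understands the domain.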

Siloed Departments and Data Fragmentation

Functional silos create their own version of truth. Marketing defines "customer" differently than Sales, who defines it differently than Finance. Each builds their own systems optimized for their workflows. The result isn't just technical debt—it's chaos about what things actually mean. When Finance closes the books, they're working with fundamentally different concepts than the product team analyzing user behavior.

This fragmentation directly degrades data quality because reconciliation happens manually, late, and incompletely. The longer data stays within silos, the more it drifts from shared reality.
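A small sketch shows how quickly definitions diverge. The records, field names, and the three department rules below are hypothetical, chosen only to illustrate the pattern; real definitions are usually far messier.

```python
# Hypothetical records; the flags stand in for each department's own system.
records = [
    {"id": 1, "signed_up": True, "closed_deal": True, "invoice_paid": True},
    {"id": 2, "signed_up": True, "closed_deal": True, "invoice_paid": False},
    {"id": 3, "signed_up": True, "closed_deal": False, "invoice_paid": False},
    {"id": 4, "signed_up": True, "closed_deal": False, "invoice_paid": False},
]

# Each department's (assumed) definition of "customer".
definitions = {
    "Marketing": lambda r: r["signed_up"],
    "Sales": lambda r: r["closed_deal"],
    "Finance": lambda r: r["invoice_paid"],
}


def customer_ids(department: str) -> set:
    """Return the ids that count as customers under one department's rule."""
    is_customer = definitions[department]
    return {r["id"] for r in records if is_customer(r)}


counts = {dept: len(customer_ids(dept)) for dept in definitions}
print(counts)  # {'Marketing': 4, 'Sales': 2, 'Finance': 1}

# Records that Marketing counts as customers but Finance does not.
disputed = customer_ids("Marketing") - customer_ids("Finance")
print(sorted(disputed))  # [2, 3, 4]
```

Same four records, three different answers to "how many customers do we have?" The `disputed` set is the work that manual reconciliation has to absorb.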

Accountability Gaps

In most organizations, nobody actually owns data quality. IT owns systems. Business units own processes. Analysts own reports. But the data itself? It's an orphan. This diffusion of responsibility means data quality becomes everyone's problem and therefore no one's priority.

High-quality data requires clear ownership—someone who feels existential pain when data is wrong and has authority to fix it. This rarely maps cleanly to traditional functional structures.
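One way to make the gap visible is to record who owns each system separately from who owns the data in it. The sketch below assumes a hypothetical, hand-rolled catalog; the `CatalogEntry` class, its fields, and the dataset names are illustrative, not part of any specific catalog product.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CatalogEntry:
    dataset: str
    system_owner: str                  # who runs the system (often IT)
    data_owner: Optional[str] = None   # who answers for the data itself


# Hypothetical catalog: every dataset has a system owner, few have a data owner.
catalog = [
    CatalogEntry("crm.accounts", system_owner="IT", data_owner="Sales Ops"),
    CatalogEntry("billing.invoices", system_owner="IT"),
    CatalogEntry("web.events", system_owner="Platform"),
]

# Datasets where someone owns the system but nobody owns the data.
orphans = [entry.dataset for entry in catalog if entry.data_owner is None]
print(orphans)  # ['billing.invoices', 'web.events']
```

Listing the orphans does not fix accountability by itself, but it turns a diffuse problem into a specific list of names to assign.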

Data Quality's Impact on Structure

Poor Data Forces Organizational Workarounds

When centralized data can't be trusted, organizations spawn shadow systems. Excel spreadsheets multiply. Teams build their own databases. What starts as a practical workaround ossifies into parallel infrastructure that further fragments the organization.

I've seen companies where the "official" CRM is essentially decorative—the real customer data lives in a senior business analyst's personal Access database. This isn't just a technology problem; it's a structural adaptation to systemic data unreliability.

Quality Data Enables Flatter Structures

Reliable, accessible data reduces coordination costs dramatically. Teams can make decisions autonomously because they trust the information they're seeing. You need fewer layers of management to reconcile conflicting views of reality because there's actually a shared reality to reference.

Companies with excellent data platforms can operate with more distributed authority—product teams can see real usage data, sales can track pipeline accurately, finance can trust automated reporting. But this only works if everyone believes the numbers.

Data Quality Reflects Power Dynamics

Which data gets attention reveals organizational priorities. Customer acquisition metrics are pristine while customer retention data is a mess? That tells you what leadership actually cares about, regardless of stated strategy.

Similarly, political power flows to whoever controls definitive data. The tension between IT-owned data warehouses and business-owned analytics platforms isn't just technical—it's a struggle over organizational authority.

The kDS Angle

What we're building with kDS is essentially a structural intervention disguised as a technology platform. By automating subject matter expert (SME) interviews, the platform creates accountability where it often doesn't exist, prompting domain experts to articulate what data means, where it comes from, and who's responsible for it.

The real insight is that data discovery isn't primarily a technical challenge; it's an organizational one. The scattered knowledge, unclear ownership, and undocumented context aren't bugs; they're features of how most companies actually operate. kDS makes the implicit explicit, which is inherently disruptive to existing power structures.

The companies that will get the most value from kDS are probably those experiencing the pain of their current structure—growing fast enough that informal coordination breaks down, or complex enough that siloed approaches create expensive redundancies. The tool works best when there's already organizational recognition that the current approach isn't sustainable.

Looking Forward

Understanding the relationship between organizational structure and data quality isn't just an academic exercise—it's essential for anyone trying to improve data management in complex organizations. The structures we create shape our data, and our data shapes our structures. Breaking this cycle requires tools and approaches that address both simultaneously.

That's the promise of the Organizational Data Flow Architecture approach: not just mapping data, but mapping the organizational meaning and business problems that data is designed to solve.

As always, thank you for stopping by.